AI Futures: Beyond Human Labor

Written by: Jaffar Humayoon

About this listen

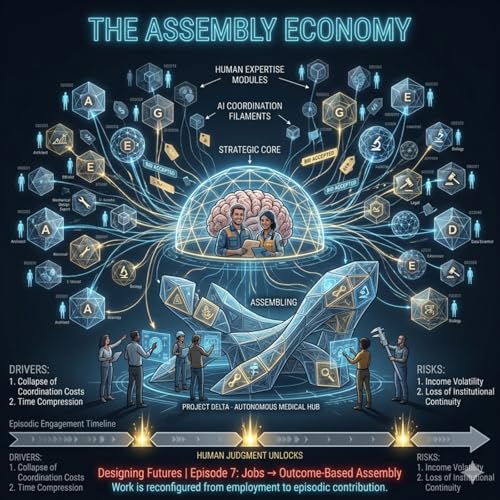

AI Futures is a serialized problem-space exploration of artificial intelligence and its quiet disruption of modern society.

This is not a sci-fi podcast. There are no killer robots, no sentient machines, and no sudden collapse. Instead, this series examines a more plausible trajectory: a world where AI integrates smoothly, efficiently—and outcompetes human labor without ever declaring war on it.

Each episode isolates a single variable—full-scale AI adoption—while holding everything else constant. No new laws. No universal basic income. No political reset. Just today’s economic, educational, and institutional systems trying to survive tomorrow’s logic.

The result is a slow-motion unraveling:

- Labor becomes inefficient rather than obsolete

- Income disappears before demand does

- Productivity rises while value circulation collapses

- Entire populations lose relevance without failing

Told across five cumulative arcs, AI Futures maps the structural dependencies modern society relies on and how AI quietly erodes them.

This series does not propose solutions. It deliberately avoids policy prescriptions.

Its purpose is harder and more uncomfortable: to define the real problem before pretending we can fix it.

Treat this as fiction if you like. But don’t be surprised if you recognize your present inside it.

FOUNDATIONS

- Episode 1: The Machines Worked Too Well

- Episode 2: The Cognitive Tier Framework

- Episode 3: Is a Thought Factory Possible?

- Episode 4: The Schools That Taught Irrelevance

- Episode 5: The Demographic Misalignment

- Episode 6: History Doesn’t Loop Back

ACCELERATION

- Episode 7: The Productivity Illusion

- Episode 8: From Human to Token: Inside MAANG

- Episode 9: The Corporate Balance Sheet Shift

- Episode 10: The Loyalty Illusion

- Episode 11: The Gravitational Pull Toward AI

- Episode 12: The Working Core

COLLAPSE

- Episode 13: The Job Displacement Chain

- Episode 14: No Parallel Jobs Left

- Episode 15: Collapse of the Consumer Base

- Episode 16: When the Back Office Breaks

- Episode 17: Europe’s Structural Vulnerability

- Episode 18: The Forex Drain

- Episode 19: The Lending Engine Cracks

- Episode 20: The Fraying of Order

STRATEGY

- Episode 21: The Ban That Burned the Bridge

- Episode 22: Bread and Circuses

- Episode 23: When Demography Meets Disruption

- Episode 24: The Cognitive Scarcity Paradox

- Episode 25: Logic Isn’t Enough

- Episode 26: Why Societies Can’t Think Their Way Out

RISK

- Episode 27: Innovation vs. Sovereignty

- Episode 28: AI Decentralization

- Episode 29: The Individual Hacker Myth

- Episode 30: AI Optimizes. Only Humans Disrupt

FINALE

- Finale: The Decay

A system optimized past the point where humans matter.

- Mar 3 2026, 19 mins

- Feb 27 2026, 16 mins

- Feb 14 2026, 30 mins