The Metamorphosis of Computing Architecture

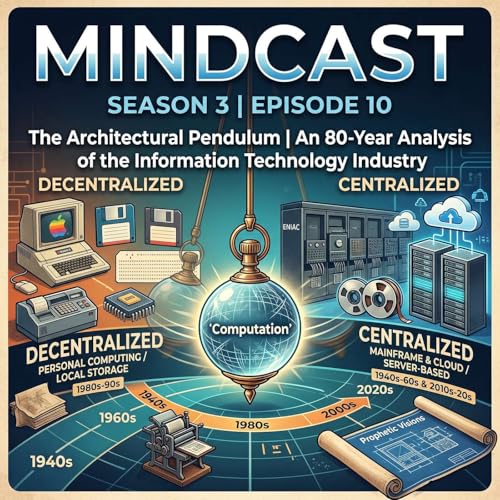

The trajectory of the Information Technology (IT) industry over the past eight decades represents one of the most profound, accelerated, and pervasive periods of technological evolution in the history of human civilisation. From the colossal, room-sized calculating engines of the 1940s to the ubiquitous, invisible infrastructure of modern hyper-scale cloud computing, the mechanisms by which humanity manages, processes, and disseminates information have undergone continuous revolution. This 80-year span is characterised not merely by the exponential increase in raw computational power, a phenomenon largely quantified and predicted by Moore’s Law, but by a violent, cyclical oscillation in underlying architectural philosophy. The industry has relentlessly swung back and forth between paradigms of centralised control and decentralised empowerment, continuously seeking the optimal balance between administrative efficiency, financial cost, security, and user autonomy.

At the very heart of this historical evolution lies a fundamental, unresolved debate regarding the optimal locus of computational processing and data storage. Early computing was strictly centralised by necessity through the mainframe computer. The advent of the microprocessor democratised computing, distributing processing power and localised storage directly to the desktop via the Personal Computer (PC). However, as local networking matured, an architectural counter-revolution emerged in the 1990s. Championed by industry titans at IBM, Oracle, and Sun Microsystems, this movement argued fiercely that the "thin client" paired with a large, centralised back-end server represented the objectively superior enterprise architecture, heavily criticising the PC's localised storage and processing model as a financial and operational failure.

Today, the total dominance of cloud computing appears, at first glance, to be a complete vindication and realisation of this centralised, thin-client vision. Yet the modern cloud is vastly more nuanced than its predecessors, encompassing highly distributed edge networks, containerised micro-services, and elastic scalability. Simultaneously, the sheer breadth of software services and the fundamental manner in which humanity now manages information have triggered what can only be described as a "silent reformation". Much as the printing press altered the structural conditions of intellectual life and religious understanding during the Renaissance, the contemporary IT ecosystem has fundamentally rewritten the rules of commerce, communication, and human cognition. Astonishingly, the blueprints for this modern reality were not accidental; they were explicitly predicted, theorised, and mapped out by a handful of visionaries between 1945 and 1963. This podcast provides an exhaustive, granular examination of the IT industry's architectural shifts, the historic battle between local and server-based computing, and the prophetic visions that charted the course of this ongoing silent reformation.
